A/B testing has always depended on one thing: showing the same visitor the same variant consistently. For years, cookies handled that job. However, with Safari and Firefox blocking third-party cookies by default, Safari expiring script-set first-party cookies after a few days, and consent banners shrinking the audience further, the old approach is broken. Fortunately, A/B testing without cookies isn’t just possible. It’s often better.

This guide covers the tools, methods, and implementation strategies you need to run reliable split tests in a privacy-first world.

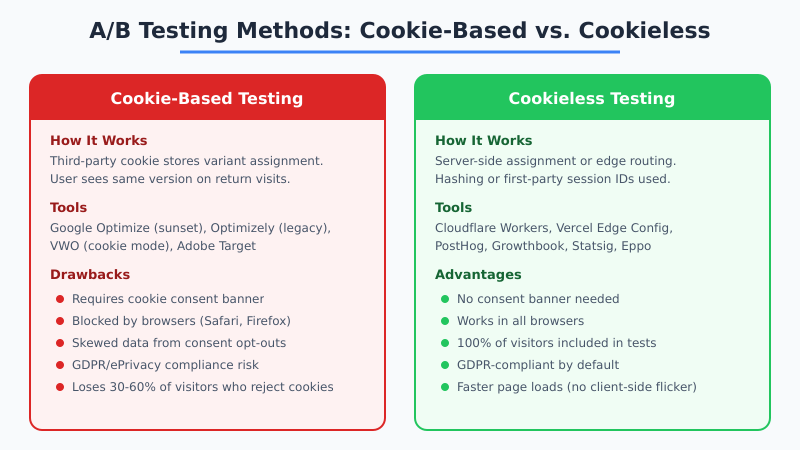

Why Cookie-Based A/B Testing Is Failing

Traditional A/B testing tools like the now-sunset Google Optimize relied on cookies to assign visitors to test variants. The cookie stored a variant ID, and on return visits, the tool read that cookie to serve the same version. Simple and effective, until browsers started blocking or expiring cookies and visitors started declining them.

Here’s the current landscape:

- Safari: Blocks all third-party cookies by default since 2020. ITP also caps first-party cookies set via JavaScript at 7 days.

- Firefox: Total Cookie Protection isolates cookies per site, breaking cross-site tracking.

- Chrome: Google announced a third-party cookie phase-out with Privacy Sandbox as the replacement, then reversed course in 2024 and left cookies as a user-controlled setting.

- EU regulations: The ePrivacy Directive requires consent before setting non-essential cookies, including A/B testing cookies.

Consequently, cookie-based testing can miss 30-60% of your visitors. Those who reject cookie banners or use blocking browsers simply don’t participate in your tests. That shrinks your sample and skews it toward visitors who accept tracking, which undermines your results.

If you’re still weighing whether cookies are necessary for your analytics setup, read our breakdown on whether you really need a cookie banner.

Cookie-Based vs. Cookieless A/B Testing

The differences between these approaches go beyond just technical implementation. They affect data quality, compliance, and performance.

| Factor | Cookie-Based | Cookieless |
|---|---|---|
| Visitor coverage | 40-70% (consent-dependent) | 100% |
| Consent required | Yes (EU, UK, Brazil) | No (if no PII processed) |
| Browser compatibility | Limited (Safari, Firefox block) | Universal |
| Page load impact | Client-side flicker common | Zero flicker with edge/server |
| Variant consistency | Lost when cookies expire/clear | Depends on method |
| Implementation complexity | Low (drop-in script) | Medium (server/edge config) |
| Statistical validity | Compromised by opt-outs | Full sample size maintained |

Therefore, the move to cookieless testing isn’t just about compliance — it’s about getting better data.

5 Methods for A/B Testing Without Cookies

There’s no single approach to cookieless A/B testing. The right method depends on your tech stack, traffic volume, and testing complexity. Here are five proven approaches.

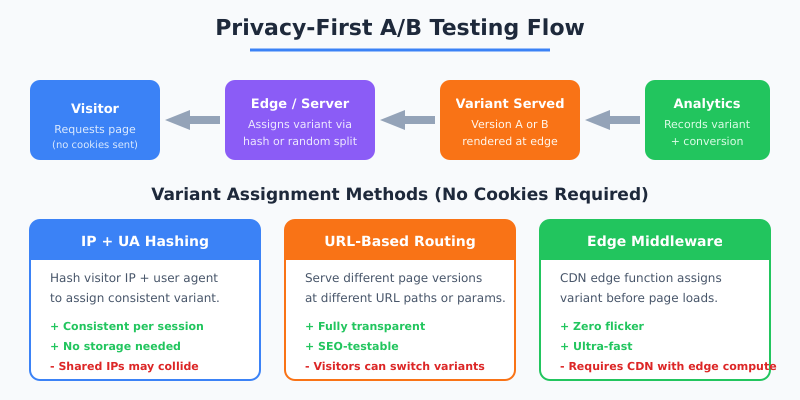

1. Server-Side Split Testing

The server assigns variants before the page renders. The assignment can use IP hashing, session IDs, or random allocation. Because the decision happens server-side, there’s no client-side flicker and no cookies needed.

Best for: Teams with backend access who run frequent tests. Works well with server-side analytics setups.
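As a rough sketch of what "assign before render" can look like, the snippet below hashes an anonymous session ID and picks the markup on the server. Everything named here (`hash32`, `assignVariant`, `renderPricingPage`, the experiment key, the button labels) is an illustrative assumption, and the hash is simply FNV-1a plus the MurmurHash3 finalizer, chosen because it fits inline rather than because any tool requires it.

```javascript
// Small non-cryptographic hash: FNV-1a over UTF-16 code units,
// followed by the MurmurHash3 32-bit finalizer for better bit mixing.
function hash32(text) {
  let hash = 0x811c9dc5; // FNV-1a offset basis
  for (let i = 0; i < text.length; i++) {
    hash ^= text.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193); // FNV prime
  }
  hash ^= hash >>> 16;
  hash = Math.imul(hash, 0x85ebca6b);
  hash ^= hash >>> 13;
  hash = Math.imul(hash, 0xc2b2ae35);
  hash ^= hash >>> 16;
  return hash >>> 0; // unsigned 32-bit integer
}

// The same experiment key and session ID always produce the same variant.
function assignVariant(experimentKey, sessionId) {
  return hash32(`${experimentKey}:${sessionId}`) % 2 === 0 ? 'control' : 'treatment';
}

// Hypothetical server-side render step: the variant is decided before any HTML is sent.
function renderPricingPage(sessionId) {
  const variant = assignVariant('pricing-cta', sessionId);
  const label = variant === 'treatment' ? 'Start free trial' : 'Sign up';
  return { variant, html: `<button data-variant="${variant}">${label}</button>` };
}

const page = renderPricingPage('session-8f3a');
console.log(page.variant, page.html);
```

Including the experiment key in the hashed string keeps assignments in one test from lining up with assignments in another.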

2. Edge-Based Testing (CDN Middleware)

CDN providers like Cloudflare, Vercel, and Fastly offer edge computing functions that intercept requests and serve different page variants. The assignment happens at the CDN level — milliseconds before the page loads.

Best for: High-traffic sites using modern CDNs. Delivers the fastest possible test execution.

3. URL-Based Split Testing

Instead of showing different content on the same URL, you create separate URLs for each variant (e.g., /pricing-a/ and /pricing-b/) and split traffic using server redirects or DNS-level routing.

Best for: Major page redesigns where variants differ significantly. Also useful for SEO split testing.

4. First-Party Session Storage

Use browser sessionStorage (not cookies) to maintain variant assignment during a single session. This doesn’t persist across sessions but keeps the experience consistent during one visit.

Best for: Simple front-end tests where cross-session consistency isn’t critical.
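A minimal sketch of the sessionStorage approach, assuming a two-variant test. The function name, the `ab:` key prefix, and the experiment key are made up for illustration; the storage object is passed in so the same function works with `window.sessionStorage` in a browser or with a stand-in elsewhere.

```javascript
// Returns the variant stored for this tab session, assigning one at random on first use.
// `storage` is any object with getItem/setItem; in a browser, pass window.sessionStorage.
function getSessionVariant(storage, experimentKey, random = Math.random) {
  const storageKey = `ab:${experimentKey}`;
  const existing = storage.getItem(storageKey);
  if (existing === 'control' || existing === 'treatment') {
    return existing; // keep the visit consistent
  }
  const variant = random() < 0.5 ? 'control' : 'treatment';
  storage.setItem(storageKey, variant);
  return variant;
}

// Browser usage, guarded so the snippet also loads outside a browser.
if (typeof window !== 'undefined' && window.sessionStorage) {
  const variant = getSessionVariant(window.sessionStorage, 'hero-headline');
  document.documentElement.dataset.variant = variant;
}
```

Because the assignment lives in sessionStorage, it disappears when the tab closes, which is exactly the cross-session limitation described above.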

5. Feature Flags with Hashing

Feature flag platforms assign variants based on a hash of non-personal attributes (IP + user agent, or an anonymous session ID). The hash produces a deterministic result, meaning the same inputs always get the same variant.

Best for: Product teams already using feature flags for releases who want to add experimentation.
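To make the "deterministic hash" idea concrete, here is a hedged sketch of weighted bucketing: the hash is mapped to a point in [0, 1) and matched against cumulative variant weights. The `flag` object shape and the names `hash32` and `evaluateFlag` are assumptions for this example and do not mirror any particular vendor’s SDK.

```javascript
// FNV-1a plus the MurmurHash3 finalizer; returns an unsigned 32-bit integer.
function hash32(text) {
  let hash = 0x811c9dc5;
  for (let i = 0; i < text.length; i++) {
    hash ^= text.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193);
  }
  hash ^= hash >>> 16;
  hash = Math.imul(hash, 0x85ebca6b);
  hash ^= hash >>> 13;
  hash = Math.imul(hash, 0xc2b2ae35);
  hash ^= hash >>> 16;
  return hash >>> 0;
}

// Map the hash to [0, 1) and walk the cumulative weights.
function evaluateFlag(flag, unitId) {
  const point = hash32(`${flag.key}:${unitId}`) / 2 ** 32;
  let cumulative = 0;
  for (const { name, weight } of flag.variants) {
    cumulative += weight;
    if (point < cumulative) return name;
  }
  // Fallback in case floating-point rounding leaves the weights just under 1.
  return flag.variants[flag.variants.length - 1].name;
}

// Hypothetical flag: 80% of anonymous sessions stay on control.
const checkoutFlag = {
  key: 'checkout-redesign',
  variants: [
    { name: 'control', weight: 0.8 },
    { name: 'treatment', weight: 0.2 },
  ],
};

console.log(evaluateFlag(checkoutFlag, 'anon-session-42'));
```

Changing the weights later moves the bucket boundaries, so some sessions near a boundary will switch variants; that is a property of this simple scheme worth keeping in mind.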

Privacy-First A/B Testing Tools

Several tools now offer cookieless A/B testing as a core feature. Here’s how the leading options compare:

| Tool | Cookieless Mode | Server-Side | Edge Support | Pricing |
|---|---|---|---|---|
| PostHog | Yes | Yes | No | Free tier available |
| GrowthBook | Yes | Yes | Yes | Free (open source) |
| Statsig | Yes | Yes | Yes | Free tier available |
| Eppo | Yes | Yes | Yes | Enterprise pricing |
| VWO | Server-side only | Yes | No | Starts at $99/mo |
| Optimizely | Server-side only | Yes | Yes | Enterprise pricing |

Moreover, open-source options like GrowthBook deserve special attention. They let you self-host the experimentation platform, giving you full control over data without any third-party processing.

Implementing Server-Side A/B Tests: A Step-by-Step Approach

Server-side testing is the most common cookieless method. Here’s how to implement it:

- Define your hypothesis. What are you testing, and what metric determines the winner? Be specific: “Changing the CTA button color from blue to green will increase click-through rate by 5%.”

- Set up variant assignment. Use a hashing function that takes a non-personal identifier (like a request fingerprint) and deterministically assigns a variant. Most feature flag SDKs handle this automatically.

- Render variants server-side. Your application code checks the assigned variant and renders the appropriate version before sending HTML to the browser.

- Track conversions. Send conversion events to your analytics platform with the variant identifier attached. Privacy-first tools like Plausible support custom event properties for this purpose.

- Calculate statistical significance. Use a Bayesian or frequentist calculator to determine when you have enough data to declare a winner. Don’t peek at results early.

Furthermore, always run an A/A test first (same content, two groups) to validate that your assignment mechanism distributes traffic evenly.
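For the significance step, one frequentist option is a two-proportion z-test on session counts. The sketch below is an illustration under that assumption, with invented counts; the function names are not from any library. The same function doubles as an A/A check: feed it the two identical groups and expect a large p-value.

```javascript
// Standard normal CDF using the Abramowitz-Stegun 7.1.26 approximation of erf.
function normalCdf(z) {
  const x = Math.abs(z) / Math.SQRT2;
  const t = 1 / (1 + 0.3275911 * x);
  const poly =
    ((((1.061405429 * t - 1.453152027) * t + 1.421413741) * t - 0.284496736) * t + 0.254829592) * t;
  const erf = 1 - poly * Math.exp(-x * x);
  return z >= 0 ? 0.5 * (1 + erf) : 0.5 * (1 - erf);
}

// Two-sided z-test for the difference between two conversion rates.
function twoProportionZTest(controlConversions, controlSessions, treatmentConversions, treatmentSessions) {
  const controlRate = controlConversions / controlSessions;
  const treatmentRate = treatmentConversions / treatmentSessions;
  const pooledRate =
    (controlConversions + treatmentConversions) / (controlSessions + treatmentSessions);
  const standardError = Math.sqrt(
    pooledRate * (1 - pooledRate) * (1 / controlSessions + 1 / treatmentSessions)
  );
  const z = (treatmentRate - controlRate) / standardError;
  const pValue = 2 * (1 - normalCdf(Math.abs(z)));
  return { z, pValue };
}

// Invented numbers: 4% vs 5% conversion on 5,000 sessions each.
const abResult = twoProportionZTest(200, 5000, 250, 5000);
console.log(abResult.z.toFixed(2), abResult.pValue.toFixed(4));

// A/A check: identical groups should not look significantly different.
const aaResult = twoProportionZTest(200, 5000, 200, 5000);
console.log(aaResult.pValue.toFixed(4));
```

Run the test once, at the sample size you committed to in advance; re-running it daily and stopping at the first p-value below 0.05 is the peeking problem mentioned above.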

Measuring A/B Test Results Without Cookies

Conversion tracking without cookies requires a different approach to measurement. Here’s what changes:

- Session-level attribution replaces user-level. You measure conversions per session rather than per unique user. This is more privacy-friendly and still statistically valid for most tests.

- Server-side event tracking. Send conversion events from your backend rather than relying on client-side pixels. This captures 100% of conversions regardless of ad blockers.

- UTM-based segmentation. Combine A/B test data with UTM parameters to understand how different traffic sources respond to variants.

- Aggregate statistics only. Calculate conversion rates, confidence intervals, and effect sizes on aggregate data — no individual user profiles needed.

For a comprehensive look at tracking conversions without third-party cookies, see our detailed guide on cookieless conversion tracking.
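The session-level, aggregate-only measurement described above can be sketched in a few lines. This assumes each record is one session carrying only a variant label and a conversion flag; the record shape and the `summarizeSessions` name are hypothetical, and the interval is the plain normal approximation, which is rough for small samples.

```javascript
// Each record represents one session: { variant, converted }. No user identifier is kept.
function summarizeSessions(sessions) {
  const totals = {};
  for (const { variant, converted } of sessions) {
    if (!totals[variant]) totals[variant] = { sessions: 0, conversions: 0 };
    totals[variant].sessions += 1;
    if (converted) totals[variant].conversions += 1;
  }

  const summary = {};
  for (const [variant, counts] of Object.entries(totals)) {
    const rate = counts.conversions / counts.sessions;
    // 95% confidence interval via the normal approximation.
    const margin = 1.96 * Math.sqrt((rate * (1 - rate)) / counts.sessions);
    summary[variant] = {
      sessions: counts.sessions,
      conversions: counts.conversions,
      rate,
      low: Math.max(0, rate - margin),
      high: Math.min(1, rate + margin),
    };
  }
  return summary;
}

// Tiny invented data set, far too small for a real test.
const sampleSessions = [
  { variant: 'control', converted: true },
  { variant: 'control', converted: false },
  { variant: 'control', converted: false },
  { variant: 'control', converted: true },
  { variant: 'treatment', converted: true },
  { variant: 'treatment', converted: true },
  { variant: 'treatment', converted: false },
  { variant: 'treatment', converted: true },
];

const sessionSummary = summarizeSessions(sampleSessions);
console.log(sessionSummary);
```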

Edge-Based Testing with Cloudflare Workers

Edge-based A/B testing is becoming the preferred method for performance-sensitive sites. Here’s why — and a simplified implementation example:

Cloudflare Workers run JavaScript at the CDN edge, intercepting requests before they reach your origin server. You can modify the response to serve different page variants.

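```javascript
// Simplified Cloudflare Worker A/B test

// Hash a string with SHA-256 and return an unsigned 32-bit integer
async function hashString(input) {
  const data = new TextEncoder().encode(input);
  const digest = await crypto.subtle.digest('SHA-256', data);
  return new DataView(digest).getUint32(0);
}

export default {
  async fetch(request) {
    const url = new URL(request.url);

    // Hash-based variant assignment (no cookies)
    const ip = request.headers.get('CF-Connecting-IP') ?? '';
    const hash = await hashString(ip + url.pathname);
    const variant = hash % 2 === 0 ? 'control' : 'treatment';

    // Fetch the appropriate variant from the origin (absolute URL, query string preserved)
    const variantUrl = new URL(url);
    if (variant === 'treatment') {
      variantUrl.searchParams.set('variant', 'b');
    }
    const response = await fetch(new Request(variantUrl.toString(), request));

    // Copy the response so its headers can be modified
    const modifiedResponse = new Response(response.body, response);
    modifiedResponse.headers.set('X-Variant', variant);
    return modifiedResponse;
  },
};
```

In other words, the entire test runs before the visitor’s browser even receives the page. No flicker, no cookies, no consent required.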

Statistical Considerations for Cookieless Tests

Running A/B tests without cookies changes some statistical assumptions. Here’s what to account for:

| Factor | Cookie-Based | Cookieless | Impact |
|---|---|---|---|
| Unit of analysis | User (cookie ID) | Session or request | Larger sample size needed |
| Return visitor handling | Same variant guaranteed | May vary by method | Use hash-based assignment |
| Test duration | Longer (user-level metrics) | Shorter (more data points) | Faster time to significance |
| Sample size | Reduced by opt-outs | Full traffic included | More reliable results |

Consequently, cookieless tests often reach statistical significance faster because they include 100% of traffic instead of only the visitors who accepted cookies.

Best Practices for Privacy-First Experimentation

To get the most from cookieless A/B testing, follow these guidelines:

- Test one variable at a time. Multivariate tests require exponentially more traffic. Start with simple A/B splits.

- Set your sample size in advance. Use a sample size calculator to determine how long to run the test before you start.

- Don’t stop tests early. Peeking at results and stopping when you see a winner inflates false positive rates.

- Document everything. Record your hypothesis, variant descriptions, traffic allocation, start date, and success metrics before launching.

- Consider the full conversion funnel. A variant that wins on click-through rate might lose on final conversion rate. Measure what matters for revenue.

- Use server-side or edge assignment. Client-side methods still risk flicker and can be blocked by privacy tools.

Additionally, always validate your testing setup with an A/A test. If your A/A test shows a statistically significant difference, your assignment mechanism has a bug.
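Setting the sample size in advance does not need a dedicated tool. The sketch below uses the standard two-proportion formula and assumes a two-sided 5% significance level and 80% power (the default z-values); the function name and the example rates are illustrative.

```javascript
// Sessions needed per variant to detect a change from baselineRate to expectedRate.
// Defaults assume alpha = 0.05 (two-sided, z = 1.96) and 80% power (z = 0.8416).
function sampleSizePerVariant(baselineRate, expectedRate, zAlpha = 1.96, zBeta = 0.8416) {
  const averageRate = (baselineRate + expectedRate) / 2;
  const nullTerm = zAlpha * Math.sqrt(2 * averageRate * (1 - averageRate));
  const alternativeTerm =
    zBeta *
    Math.sqrt(baselineRate * (1 - baselineRate) + expectedRate * (1 - expectedRate));
  const difference = expectedRate - baselineRate;
  return Math.ceil((nullTerm + alternativeTerm) ** 2 / difference ** 2);
}

// Example: 4% baseline, hoping to detect a lift to 5%.
const sessionsNeeded = sampleSizePerVariant(0.04, 0.05);
console.log(sessionsNeeded);

// Rough duration, assuming a hypothetical 1,000 eligible sessions per day split evenly.
const daysNeeded = Math.ceil((sessionsNeeded * 2) / 1000);
console.log(daysNeeded);
```

Smaller expected lifts push the required sample up quickly, since the difference is squared in the denominator.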

Moving Forward Without Cookies

The era of cookie-dependent A/B testing is ending. However, this transition actually improves experimentation quality. You get larger sample sizes, faster results, better compliance, and zero page-load impact with server-side or edge-based approaches.

Start by evaluating open-source tools like GrowthBook or free tiers from PostHog and Statsig. Implement server-side assignment using IP hashing or anonymous session IDs. Track conversions through your privacy-first analytics platform. The tools exist, the methods are proven, and the results speak for themselves.

Before making any changes to your analytics stack, review the analytics migration checklist to ensure a smooth transition.